First contact

The cortex hadn't gone quiet. BrainGate crossed from monkeys to a human. EEG built the parallel infrastructure. Post 1 of 14 in How BCI got here (2003-2010, The foundations).

On June 22, 2004, a 25-year-old man named Matthew Nagle had 96 electrodes pressed into his motor cortex and, within weeks, moved a cursor across a screen using only his thoughts. The public saw a paralyzed man playing Pong with his mind. What the field saw was something more specific: proof that cortical motor neurons survive years of disuse, remain tuned to intended movement, and can be decoded in real time.

In this post, I’m tracing how BCI got from primate labs to the first human clinical trial. That transition, from “it works in monkeys” to “it works in a person”, took most of the early 2000s, and it required a particular convergence of electrode hardware, decoding software, FDA approval, and one extraordinary patient willing to test all of it. I’ll also cover the parallel non-invasive track running alongside intracortical work, because the two paths shaped each other in ways that still matter.

This is the research spine underneath this series: bci0.neural-noise.xyz. The yearly reviews from 2003–2006 are where I pulled the specific dates and papers below.

The primate foundation

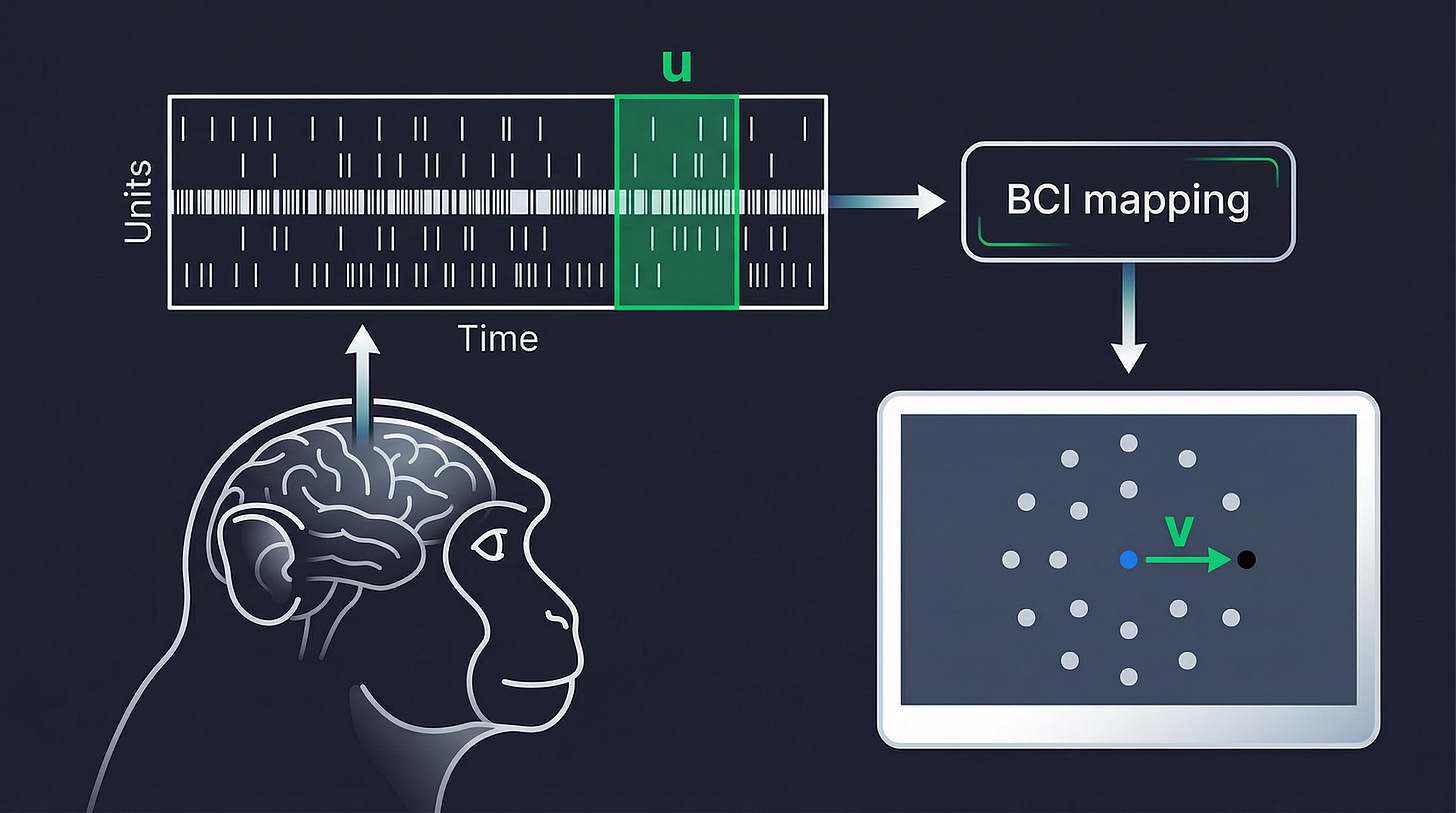

Before there was a human trial, there was more than a decade of experiments in monkeys. By 2003, three labs - Miguel Nicolelis at Duke, John Donoghue at Brown, and Andrew Schwartz at Pittsburgh - had spent years characterizing how populations of motor cortex neurons encoded intended limb movements. The culminating paper of that primate era came in October 2003: Carmena et al. in PLOS Biology, showing that rhesus monkeys with multi-electrode arrays spanning motor cortex, premotor cortex, somatosensory cortex, and posterior parietal cortex could control a robot arm to reach and grasp. The signals from these arrays could be used to decode position, velocity, and gripping force simultaneously, in real time, in closed loop.

That wasn’t just a technical step. It demonstrated that the motor cortex carried enough information for multi-parameter control, that monkeys could learn to use this feedback, and that cortical reorganization accompanied that learning.

This set the benchmark that human systems would have to approach.

Cyberkinetics Neurotechnology Systems, the Brown University spin-off co-founded by Donoghue and colleagues in 2001, spent 2003 in active FDA discussions, building the case for an Investigational Device Exemption. Their device, the Utah Microelectrode Array [1], had been tested in 22 monkeys. The question was whether it would work in a human brain that had been injured and deprived of movement for years.

Matthew Nagle and the BrainGate pilot

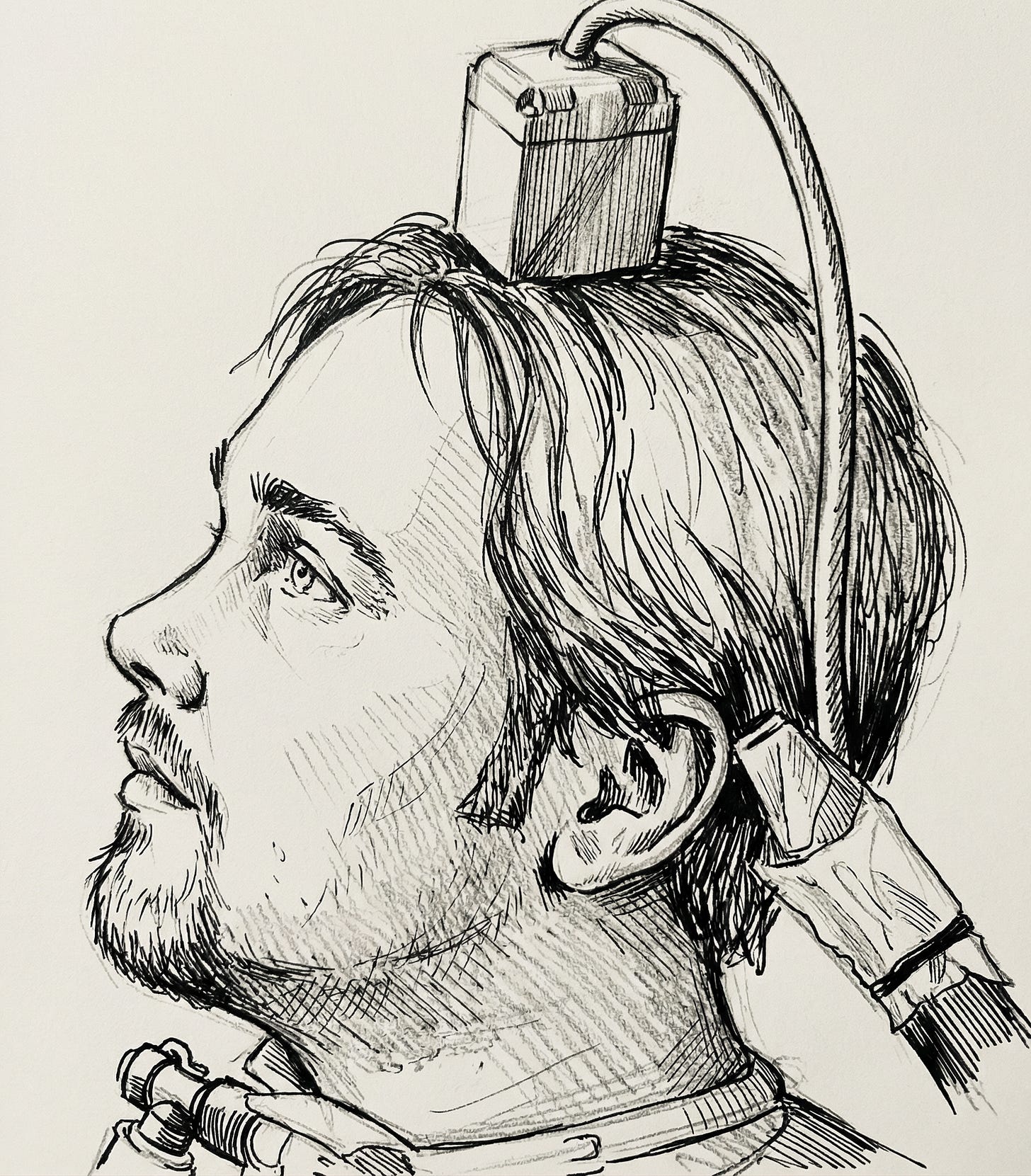

On June 22, 2004, neurosurgeon Gerhard Friehs implanted the 96-electrode array into the hand and arm region of Matthew Nagle’s right motor cortex at Rhode Island Hospital in Providence. Nagle was 25 years old, paralyzed from the neck down after a stabbing injury in 2001. The FDA IDE had been confirmed in April.

Six weeks after surgery, calibration began. Nagle was asked to imagine moving his hand in different directions. The decoder, running on software adapted from BCI2000 [2], immediately found modulated neural activity. Nagle reportedly said “Holy shit!” when he saw the cursor respond.

Over 57 sessions at New England Sinai Hospital (July 2004 through April 2005), he learned to open simulated email, change TV channels, draw circular shapes in a paint program, operate a prosthetic hand, and play “neural Pong.” What he was doing wasn’t elegant: the system required a bulky external hardware cabinet, a trained technician, and daily recalibration. But the fundamental finding was unambiguous: motor cortex neurons in a person with years of cervical spinal cord injury retained directional tuning and temporal modulation suitable for real-time decoding. The cortex hadn’t gone quiet.
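To make "directional tuning suitable for real-time decoding" concrete, here is a minimal sketch of a textbook population-vector decoder. It is not the BrainGate decoder. The cosine tuning curve, the baseline and depth values, the noise level, and every function name are assumptions chosen for illustration; only the count of 96 comes from the array described above, and even that conflates electrodes with recorded units.

```python
import math
import random


def cosine_rate(preferred_deg, intended_deg, baseline=10.0, depth=8.0):
    """Firing rate (Hz) of an idealized cosine-tuned unit (assumed model)."""
    delta = math.radians(intended_deg - preferred_deg)
    return baseline + depth * math.cos(delta)


def population_vector(rates, preferred_degs, baseline=10.0):
    """Decode a direction in degrees (0 to 360).

    Each unit votes for its preferred direction, weighted by how far
    its rate sits above or below baseline.
    """
    x = y = 0.0
    for rate, pref in zip(rates, preferred_degs):
        weight = rate - baseline
        x += weight * math.cos(math.radians(pref))
        y += weight * math.sin(math.radians(pref))
    return math.degrees(math.atan2(y, x)) % 360.0


def angular_error(a_deg, b_deg):
    """Smallest absolute difference between two directions, in degrees."""
    diff = abs(a_deg - b_deg) % 360.0
    return min(diff, 360.0 - diff)


def simulate_decode(intended_deg, n_units=96, noise_sd=2.0, seed=0):
    """Simulate one noisy population response and decode it."""
    rng = random.Random(seed)
    # Hypothetical units with evenly spaced preferred directions.
    preferred = [i * 360.0 / n_units for i in range(n_units)]
    rates = [cosine_rate(p, intended_deg) + rng.gauss(0.0, noise_sd)
             for p in preferred]
    return population_vector(rates, preferred)


if __name__ == "__main__":
    decoded = simulate_decode(intended_deg=90.0)
    error = angular_error(decoded, 90.0)
    print(f"decoded {decoded:.1f} deg, error {error:.1f} deg")
```

The point of the sketch is the dependency it exposes: if tuning had washed out after years of paralysis (a `depth` near zero in this toy model), the weighted votes would cancel and the decoded direction would be noise.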

A second participant received an implant at the University of Chicago in April 2005. A third received her implant in late 2005 or early 2006. Her data would eventually show useful neural recordings more than 1,000 days post-implant, a counter-narrative to the signal degradation story that dominated the field after Nagle.

The full peer-reviewed results came in Nature on July 13, 2006. The Hochberg, Serruya, Friehs, and Donoghue paper formally introduced BrainGate to the scientific record. The same issue contained another landmark: Santhanam, Ryu, Yu, Afshar, and Shenoy at Stanford reported 6.5 bits per second from premotor cortex plan activity in monkeys, a 4x improvement over prior systems and the first time a BCI had demonstrated information rates competitive with existing assistive communication alternatives. Two papers in one issue defined the field’s dual agenda for the next decade: clinical feasibility on one side, performance on the other.
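For readers who haven't met "bits per second" as a BCI metric, here is a small sketch of the information transfer rate formula most often used in the BCI literature, usually attributed to Wolpaw and colleagues. It assumes equally likely targets and errors spread evenly across the wrong ones. I'm not claiming this is how the Stanford group computed its figure, and the numbers in the usage lines are hypothetical.

```python
import math


def bits_per_selection(n_targets, accuracy):
    """Bits conveyed by one selection among n_targets at a given accuracy.

    B = log2(N) + P*log2(P) + (1 - P)*log2((1 - P) / (N - 1))
    """
    if n_targets < 2:
        raise ValueError("need at least two targets")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    bits = math.log2(n_targets)
    if accuracy > 0.0:  # P*log2(P) is taken as 0 when P == 0
        bits += accuracy * math.log2(accuracy)
    if accuracy < 1.0:  # the error term vanishes when P == 1
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_targets - 1))
    return bits


def bits_per_second(n_targets, accuracy, seconds_per_selection):
    """Information transfer rate for a fixed time per selection."""
    if seconds_per_selection <= 0:
        raise ValueError("seconds_per_selection must be positive")
    return bits_per_selection(n_targets, accuracy) / seconds_per_selection


if __name__ == "__main__":
    # Hypothetical system: 8 targets, 90% correct, one selection per second.
    print(round(bits_per_second(8, 0.90, 1.0), 2))
    # Chance performance carries no information.
    print(round(bits_per_selection(4, 0.25), 2))
```

The formula makes the trade-off visible: rate climbs with more targets and faster selections, but only if accuracy holds up.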

The parallel non-invasive track

While BrainGate was working through FDA approvals and clinical enrollment, a different BCI tradition was building its own infrastructure. It was more reproducible, more accessible, and in some ways more practically useful in the near term.

The Graz BCI group, led by Gert Pfurtscheller, had spent years demonstrating that imagined hand and foot movements produced distinctive patterns in scalp EEG - specifically in the mu and beta sensorimotor rhythms. By 2003, their system achieved classification accuracies up to 100% in trained subjects using just two bipolar EEG channels and linear discriminant analysis. They were already testing it in paraplegic patients with hand orthoses. The paradigm was fundamentally different from intracortical work: no surgery, no foreign body response, and no FDA IDE required, but also far less spatial resolution and far lower signal fidelity.
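The two-channel LDA setup is simple enough to sketch in a few lines. What follows is a generic two-class Fisher discriminant on two features, not the Graz group's code. The synthetic data, the idea that each feature is log band power from one bipolar channel over each hemisphere, and all names and parameter values are my assumptions.

```python
import random


def mean2(rows):
    """Mean of a list of (x, y) feature pairs."""
    n = len(rows)
    return (sum(r[0] for r in rows) / n, sum(r[1] for r in rows) / n)


def fit_lda(class_a, class_b):
    """Fit a two-class LDA with a pooled covariance and equal priors.

    Returns (w0, w1, bias); a positive score means class_b.
    """
    ma, mb = mean2(class_a), mean2(class_b)
    sxx = sxy = syy = 0.0
    for rows, m in ((class_a, ma), (class_b, mb)):
        for x, y in rows:
            dx, dy = x - m[0], y - m[1]
            sxx += dx * dx
            sxy += dx * dy
            syy += dy * dy
    dof = len(class_a) + len(class_b) - 2
    sxx, sxy, syy = sxx / dof, sxy / dof, syy / dof
    det = sxx * syy - sxy * sxy
    # w = inverse(pooled covariance) applied to (mean_b - mean_a)
    dx, dy = mb[0] - ma[0], mb[1] - ma[1]
    w0 = (syy * dx - sxy * dy) / det
    w1 = (-sxy * dx + sxx * dy) / det
    # Put the decision boundary midway between the class means.
    bias = -(w0 * (ma[0] + mb[0]) + w1 * (ma[1] + mb[1])) / 2.0
    return w0, w1, bias


def predict(model, features):
    """Return 0 for class_a, 1 for class_b."""
    w0, w1, bias = model
    return 1 if w0 * features[0] + w1 * features[1] + bias > 0 else 0


def accuracy(model, class_a, class_b):
    correct = sum(predict(model, f) == 0 for f in class_a)
    correct += sum(predict(model, f) == 1 for f in class_b)
    return correct / (len(class_a) + len(class_b))


def synthetic_trials(n, seed):
    """Fake (left-channel, right-channel) log band power per trial.

    Assumed pattern: imagining one hand lowers mu power over the
    opposite hemisphere. The numbers are invented.
    """
    rng = random.Random(seed)
    left_hand = [(rng.gauss(1.0, 0.3), rng.gauss(0.0, 0.3)) for _ in range(n)]
    right_hand = [(rng.gauss(0.0, 0.3), rng.gauss(1.0, 0.3)) for _ in range(n)]
    return left_hand, right_hand


if __name__ == "__main__":
    train_left, train_right = synthetic_trials(100, seed=1)
    test_left, test_right = synthetic_trials(200, seed=2)
    model = fit_lda(train_left, train_right)
    print(f"held-out accuracy: {accuracy(model, test_left, test_right):.2f}")
```

With only two features and a linear boundary there is very little to overfit, which is part of why a setup like this could be reproduced across labs.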

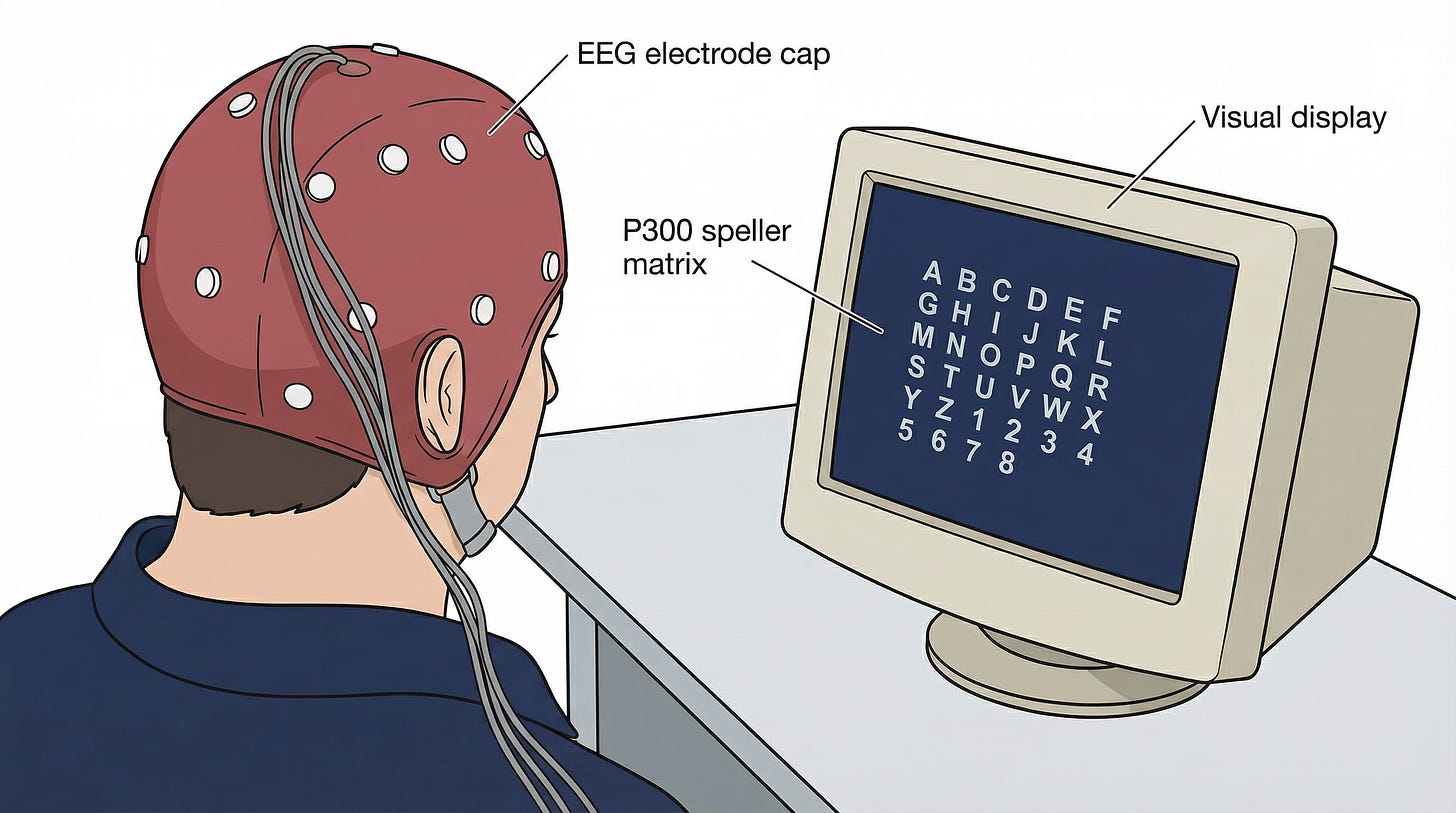

At the Wadsworth Center in Albany, Jonathan Wolpaw and Dennis McFarland were pursuing P300-based spellers and, separately, sensorimotor rhythm cursor controllers. Their December 2004 PNAS paper settled a long-standing question: EEG-based sensorimotor rhythms could support accurate two-dimensional cursor control in humans. Independent control of both horizontal and vertical dimensions (using mu rhythm for one axis, beta rhythm for the other) was possible with careful signal processing and a co-adaptive algorithm that improved in parallel with the user. This directly answered the critics who argued EEG’s resolution was inherently too limited for multi-dimensional BCI.
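Here is a rough sketch of what "an algorithm that adapts alongside the user" can mean in practice. Each cursor axis is driven by one band amplitude, normalized against running estimates that drift toward the user's recent signal. This is my simplification of the idea as described above, not the algorithm from the PNAS paper. The class, the parameter values, and the one-band-per-axis mapping are assumptions, and the user's half of the adaptation is not modeled at all.

```python
import math


class AdaptiveAxis:
    """One cursor dimension driven by one band-amplitude feature."""

    def __init__(self, gain=1.0, rate=0.05, min_var=1e-3):
        self.gain = gain        # cursor units per standard deviation
        self.rate = rate        # how quickly the running estimates adapt
        self.min_var = min_var  # floor that keeps the division stable
        self.mean = 0.0
        self.var = 1.0

    def step(self, amplitude):
        """Return this axis's cursor movement for one update."""
        # Normalize against the current running estimates...
        z = (amplitude - self.mean) / math.sqrt(self.var)
        # ...then let the estimates drift toward the recent signal.
        deviation = amplitude - self.mean
        self.mean += self.rate * deviation
        self.var += self.rate * (deviation * deviation - self.var)
        self.var = max(self.var, self.min_var)
        return self.gain * z


def move_cursor(position, mu_amp, beta_amp, x_axis, y_axis):
    """Advance an (x, y) cursor by one update from two band amplitudes."""
    x, y = position
    return (x + x_axis.step(mu_amp), y + y_axis.step(beta_amp))


if __name__ == "__main__":
    x_axis, y_axis = AdaptiveAxis(), AdaptiveAxis()
    position = (0.0, 0.0)
    # Hypothetical amplitude pairs, in arbitrary units.
    for mu_amp, beta_amp in [(2.0, -1.0), (1.5, -0.5), (0.2, 0.1)]:
        position = move_cursor(position, mu_amp, beta_amp, x_axis, y_axis)
    print(position)
```

Because the baseline tracks the signal, a sustained amplitude stops moving the cursor after a while; the user has to keep modulating relative to their own recent activity.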

The field also built shared infrastructure during this period that proved essential. BCI2000, published by Schalk and colleagues at Wadsworth in IEEE Transactions on Biomedical Engineering in June 2004, standardized real-time EEG recording, feature extraction, and feedback delivery into a modular, shareable platform. By 2007 it would be running at more than 80 research centers globally. The BCI Competition 2003 [3] created the field’s first rigorous cross-laboratory benchmark. BCI Competition III in 2005 followed, this time including ECoG data. These competitions did for BCI what ImageNet would later do for computer vision: forced the community to compete on shared ground.

Why this period mattered

The 2003–2006 period established several things that the field would build on for the next two decades.

First: cortical motor decoding was feasible in humans, not just primates. Nagle’s trial settled that. Even a brain that had been deprived of movement feedback for three years retained motor cortex neurons capable of driving a decoder. That was not guaranteed before 2004.

Second: the academic-to-clinical-trial template was established. The path from Donoghue’s Brown lab to the Cyberkinetics IDE to Friehs’ surgery to Hochberg’s clinical trial management became the model. Every major intracortical trial since has followed a version of it.

Third: the regulatory and ethical frameworks for chronically implanted neural devices began to take shape. The BrainGate IDE, and the FDA’s willingness to grant it, created precedent. Informed consent protocols, adverse event reporting, and the definition of “clinical benefit” for a BCI were all worked out in practice during these trials.

Fourth: the funding ecosystem activated. NIH’s NINDS neural prosthetics program was supporting the major invasive labs throughout this period. DARPA was beginning to increase its investment under the Human Assisted Neural Devices program. The Society for Neuroscience 2004 meeting featured noticeably expanded BCI sessions from Schwartz, Shenoy, Andersen, and the European groups. This signaled that the field was no longer a niche within a niche.

What was still uncertain

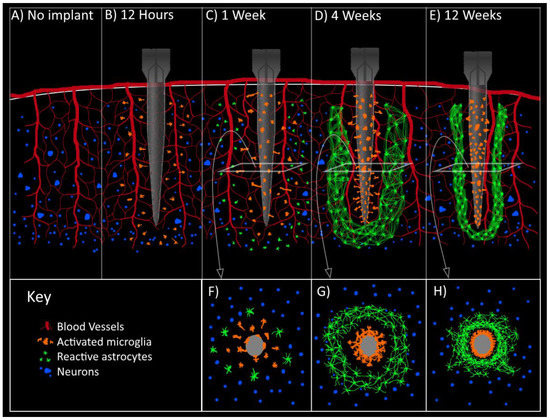

Signal longevity was the central unsolved problem. Nagle’s recordings declined significantly by month six or seven. This was due to astrocytic encapsulation and neuronal retreat from the electrode tips, both part of the brain’s foreign-body response to rigid silicon. Groups at Michigan, Utah, and MIT were already exploring flexible polymer electrodes and anti-inflammatory surface coatings, but there were no solutions yet. The field didn’t know whether useful recordings would last six months or six years in a given patient, or why the variability was so high.

Whether cursor control would translate to functional independence was also genuinely open. Nagle’s demonstrations were striking, but the system required a technician, a hardware cabinet, and daily recalibration. It wasn’t something a person could use at home. The gap between a controlled clinical demonstration and a device someone could rely on for communication or motor control was wide, and the field was only beginning to understand how wide.

And there was no business model. Cyberkinetics was struggling to raise the $40–50 million Donoghue and CEO Timothy Surgenor estimated they needed to commercialize. By 2007, the company had largely wound down its BrainGate investment. It was unable to attract the capital a genuinely novel, high-risk medical device required before it had clear clinical outcomes data, regulatory approval, or a reimbursement pathway. The BrainGate pilot had been a scientific success and a commercial near-failure.

The invasive-versus-non-invasive question was also unresolved. Intracortical systems offered far higher signal quality but carried surgical risk and the chronic recording problem. EEG was safe and accessible but limited. And then, in 2004, a third option appeared: Eric Leuthardt and colleagues at Washington University published the first demonstration that electrocorticographic signals (recorded from subdural electrode strips that sit on the cortical surface without penetrating it) could enable one-dimensional cursor control with training times of just three to twenty-four minutes, achieving success rates of 74–100%. That result opened a design space nobody had fully mapped yet.

The bridge forward

By the end of 2006, BCI had a peer-reviewed proof of principle in a human patient, two competing information-theoretic benchmarks, a global software platform, a generation of algorithms sharpened by public competitions, and a field-defining open problem in signal longevity. What it didn’t have was a second neural interface success story at scale: a device that had made it all the way through the clinical and commercial gauntlet.

That story already existed. It just wasn’t in motor cortex. Cochlear implants had been reaching patients since the 1980s and were already in hundreds of thousands of ears worldwide. And a surface electrode approach (ECoG) was about to show that the cortex held far more signal than anyone had expected, without the penetration that caused the longevity problem. Both threads reshaped how the BCI field thought about its own future. That’s the next post.

Source material

Yearly reviews

Monthly feeds (key months)

2003-06 - early BCI methods landscape

2004-06 - around BrainGate pilot

2006-07 - Nature publication period

Topic pages

[1] A 4×4 mm grid of 96 silicon electrodes, originally developed at the University of Utah and manufactured by Bionic Technologies (later Blackrock Microsystems).

[2] The modular BCI platform published by G. Schalk and colleagues in June 2004, the same month as Nagle’s surgery.

[3] Six publicly released datasets covering slow cortical potentials, P300, and motor imagery, drawing 99 algorithm submissions.